This is Part 2 of a three-part series. Read Part 1 here.

"Data is a precious thing and will last longer than the systems themselves."

Tim Berners-Lee, inventor of the World Wide Web

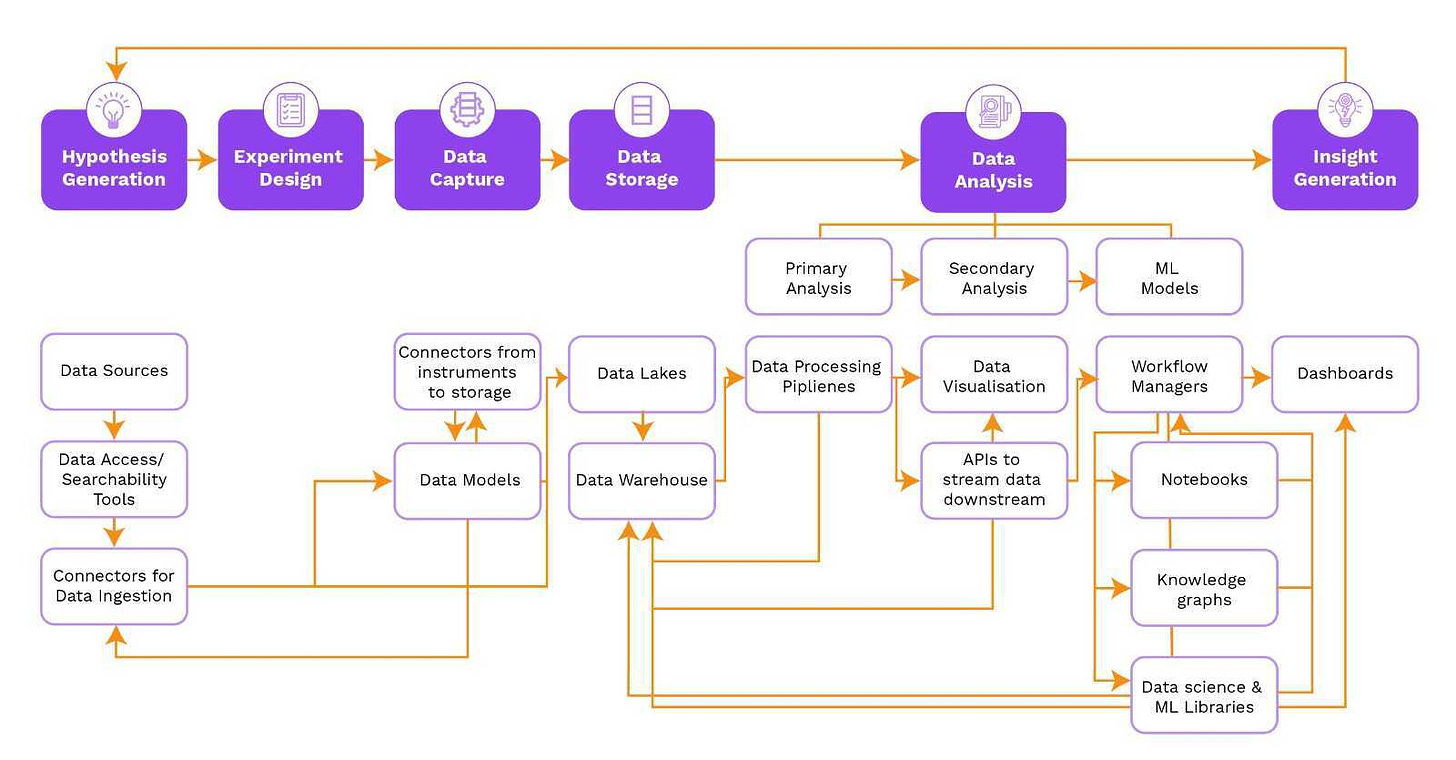

In our previous article, we explored the diverse data challenges researchers encounter across the six stages of preclinical research and development (R&D). Preclinical teams are constantly generating, storing, processing, and analyzing vast volumes of scientific data. This data must be stored securely and still be accessible to authorized personnel. However, because operational frameworks vary so widely, many tools designed for large-scale data generation, experiment design, data management, and insight generation struggle to provide real-time analysis. The result is often inconsistent experimental parameters and metadata, and complicated aggregation of replicates.

To address these challenges, we need software tools that support the processes and workflows of preclinical R&D researchers. In this article, we will discuss an ideal FAIR (Findable, Accessible, Interoperable, Reusable) tech stack by mapping the technology to the problems faced by preclinical teams at each of the six stages.

Software segments for each stage of preclinical R&D

1. Hypothesis Generation

Literature & Knowledge Management Tools

Purpose - help researchers find, organize and annotate relevant scientific literature as well as other knowledge sources.

FAIR principles - makes the information findable and accessible.

Enabling ecosystem synergy - These tools must have robust search capabilities and the ability to visualize meaningful data. It’s especially crucial that they can integrate with other tools in the ecosystem. In particular, these tools should be linked to the downstream steps of data capture and data storage.

2. Experiment Design

Experimental Design Tools

Purpose - assist researchers in designing scientific experiments, with aids for building plate maps, calculating sample sizes, randomizing samples, assigning control groups, planning statistical analysis, and documenting protocols.

FAIR principles - supports reproducibility of experimental protocols.

Enabling ecosystem synergy - Experimental design tools should be connected to data capture and storage tools as well as data analysis tools. The design information is needed later for data analysis and interpretation, so it must be captured in a standardized manner.
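To make the idea of a standardized, reproducible design concrete, here is a minimal sketch of a randomized plate map. It assumes a 96-well plate (8 rows by 12 columns) and uses only the Python standard library. The function name `build_platemap`, the record fields, and the treatment names are hypothetical and chosen for illustration; they do not come from any particular design tool.

```python
import random
import string


def build_platemap(treatments, replicates, rows=8, cols=12, seed=42):
    """Randomly assign each treatment to `replicates` wells of a plate.

    Returns a list of records sorted by well, one per assigned well.
    The seed is stored in every record so the layout can be regenerated.
    """
    # Well names such as A1 ... H12 for the default 8 x 12 layout.
    wells = [f"{row}{col}" for row in string.ascii_uppercase[:rows]
             for col in range(1, cols + 1)]
    # One label per replicate of each treatment.
    labels = [t for t in treatments for _ in range(replicates)]
    if len(labels) > len(wells):
        raise ValueError("not enough wells for the requested design")

    rng = random.Random(seed)  # local generator: same seed, same layout
    chosen = rng.sample(wells, len(labels))  # distinct wells, random order
    return [
        {"well": well, "treatment": treatment, "seed": seed}
        for well, treatment in sorted(zip(chosen, labels))
    ]


# Hypothetical design: two compounds plus a vehicle control, four replicates each.
platemap = build_platemap(["vehicle", "compound_A", "compound_B"], replicates=4)
for record in platemap:
    print(record)
```

Because each record is a plain dictionary, the design could be serialized (for example as JSON or CSV) and handed to data capture and analysis tools in the same form.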

3. Data Capture

Electronic Lab Notebooks (ELNs)

Purpose - ELNs provide a digital platform to record and organize experimental designs and data. They tend to focus on experimental protocols and unstructured data, that is, what a scientist plans to do. Some ELNs also capture and present data in a standardized format.

FAIR principles - facilitates finding experimental protocols and data.

Enabling ecosystem synergy - ELNs go hand in hand with experimental design tools (and often incorporate those tools) and are usually tightly coupled with a LIMS.

Laboratory Information Management Systems (LIMS)

Purpose - register chemical and biological entities, track samples, and manage metadata. Integration with laboratory instruments and other tools such as ELNs helps researchers link their experimental data with their designs.

FAIR principles - enables interoperability of experimental data.

Enabling ecosystem synergy - LIMS should be linked to ELNs and experimental design tools. They also work in conjunction with data storage tools to capture, store and organize experimental data.

4. Data Storage

Specialized Data Repositories

Purpose - provide a central location for storing and sharing biological and chemical data.

FAIR principles - makes preclinical data findable and reusable for analysis, which supports data-driven insights.

Enabling ecosystem synergy - These tools often provide the backbone for hypothesis generation, data analysis and insight generation tools. They ensure that research does not get lost and (hopefully) prevent scientists from spending time recreating existing knowledge.

Cloud Storage & Data Management Platforms

Purpose - store data where scalability, security, data validation and automation, data lineage, and ease of sharing are priorities.

FAIR principles - enables findability and accessibility of data.

Enabling ecosystem synergy - Cloud providers offer a number of solutions that must be integrated with data capture, data analysis and insight generation tools in order to store, share and analyze data in a synergistic manner.

5. Data Analysis

Data Analysis and Processing Tools

Purpose - empower researchers to interpret data by providing features such as bioinformatics algorithms, statistical analysis, and data cleaning.

FAIR principles - supports interoperability and reusability of preclinical data.

Enabling ecosystem synergy - These tools need to work with all upstream categories: pulling in the experimental design and results, and then pushing insights back into data capture and storage systems.
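As a rough illustration of pulling in the design and the results together, the sketch below joins a plate map (well to treatment) with raw readings (well to value) and aggregates the replicates per treatment. The function name `summarize_replicates`, the dictionary layouts, and the sample values are all hypothetical, made up purely for demonstration; a real pipeline would read both inputs from the capture and storage systems.

```python
from collections import defaultdict
from statistics import mean, stdev


def summarize_replicates(platemap, readings):
    """Group readings by treatment and report n, mean, and sample SD.

    platemap: dict mapping well name -> treatment label (from the design tool)
    readings: dict mapping well name -> measured value (from the instrument)
    Wells without a reading are skipped rather than treated as zero.
    """
    grouped = defaultdict(list)
    for well, treatment in platemap.items():
        if well in readings:
            grouped[treatment].append(readings[well])

    summary = {}
    for treatment, values in grouped.items():
        summary[treatment] = {
            "n": len(values),
            "mean": mean(values),
            # Sample standard deviation needs at least two replicates.
            "sd": stdev(values) if len(values) > 1 else None,
        }
    return summary


# Illustrative inputs only; the values are invented for the example.
design = {"A1": "vehicle", "A2": "vehicle", "B1": "compound_A", "B2": "compound_A"}
raw = {"A1": 1.0, "A2": 3.0, "B1": 5.0}  # B2 has no reading yet

summary = summarize_replicates(design, raw)
for treatment, stats in summary.items():
    print(treatment, stats)
```

Keeping the join keyed on the well identifier is what lets the analysis stay consistent with the design: if the two systems disagree on well names or treatment labels, the mismatch shows up as a missing group rather than a silently wrong average.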

6. Insight Generation

Visualization and Reporting Tools

Purpose - scientific reporting tools aid researchers in creating visualizations, reports and summaries of research findings.

FAIR principles - supports interoperability and reusability of research insights.

Enabling ecosystem synergy - These tools need to work with data analysis and storage tools in order to make the data available for actionable insights and reports.

All of these tools must work in unison; otherwise they slow down preclinical R&D. An estimated 18 hours is spent each week on avoidable data logistics tasks. Solving this is daunting for many biotech startups that just want to focus on making their science work. However, as we move towards a data-rich future, these tools and techniques will become ever more important.

Stay tuned for Part 3 of this blog series, where we’ll delve deeper into the landscape of existing software tools that help solve these problems!